In this episode of The Geek in Review, Greg Lambert and Marlene Gebauer welcome back Joel Hron, Chief Technology Officer at Thomson Reuters, for a timely conversation about the shifting relationship among foundation models, legal content providers, legal tech platforms, and the lawyers trying to make sense of the mess. Recent moves by Anthropic, including Claude’s legal practice area tools and MCP connections into legal platforms, raise a larger question for the market. Is a model provider still sitting behind the scenes, or is it starting to become a legal work environment of its own?

Hron explains Thomson Reuters’ commitment to what it calls fiduciary-grade AI, a standard built around trust, verification, transparency, and accountability. For TR, legal AI needs more than a fast answer. It needs systems lawyers trust enough to stand behind. Hron points to Westlaw, Practical Law, KeyCite validity signals, citation ledgers, and verification tools as core ingredients in building AI systems suited for high-stakes professional work. In his view, almost right is not good enough when clients, courts, regulators, and professional obligations sit on the other side of the output.

The conversation turns to how CoCounsel and Westlaw Deep Research use legal content across far more than traditional research tasks. Hron explains that when AI systems gain access to trusted legal content and verification tools, they begin researching throughout the workflow, even while revising contract language or analyzing provisions. He also describes Litigation Document Analyzer, internally nicknamed the BS Detector, a tool designed to review claims in a document and map them to supporting authority, weak support, or no support at all. For lawyers who spend as much time verifying AI output as generating it, tools like these aim to move verification from a manual scavenger hunt into a structured process.

Greg and Marlene also press Hron on Anthropic’s legal plugins, MCP, and the idea of headless legal technology. Hron argues that MCP changes access, not advantage. In his view, the application layer is shifting, but the real competitive value sits in trusted content, expert systems, governance, and domain-specific intelligence. CoCounsel’s user interface represents one expression of TR’s legal agent capabilities, while MCP opens other ways for those capabilities to appear inside broader work environments. Some work will still need a purpose-built legal interface; other work might happen through email, Word, Claude, or another agentic workflow with little visible interface at all.

The episode closes with a larger discussion about what happens when AI starts performing more of the work itself. Hron shares TR’s internal engineering OKR, where more than 50 percent of pull requests should be written by AI, and explains why 51 percent serves as a useful mental model. Once AI performs a controlling share of the work, the human role shifts from doing the task to governing the system. For legal professionals, the same transition is coming. The key question is no longer only whether AI produces useful work. It is whether lawyers have built the systems, context, safeguards, and verification layers needed to trust the work, defend the work, and remain accountable for the work.

Listen on mobile platforms: Apple Podcasts | Spotify | YouTube | Substack

[Special thanks to Legal Technology Hub for sponsoring this episode.]

Email: geekinreviewpodcast@gmail.com

Music: Jerry David DeCicca

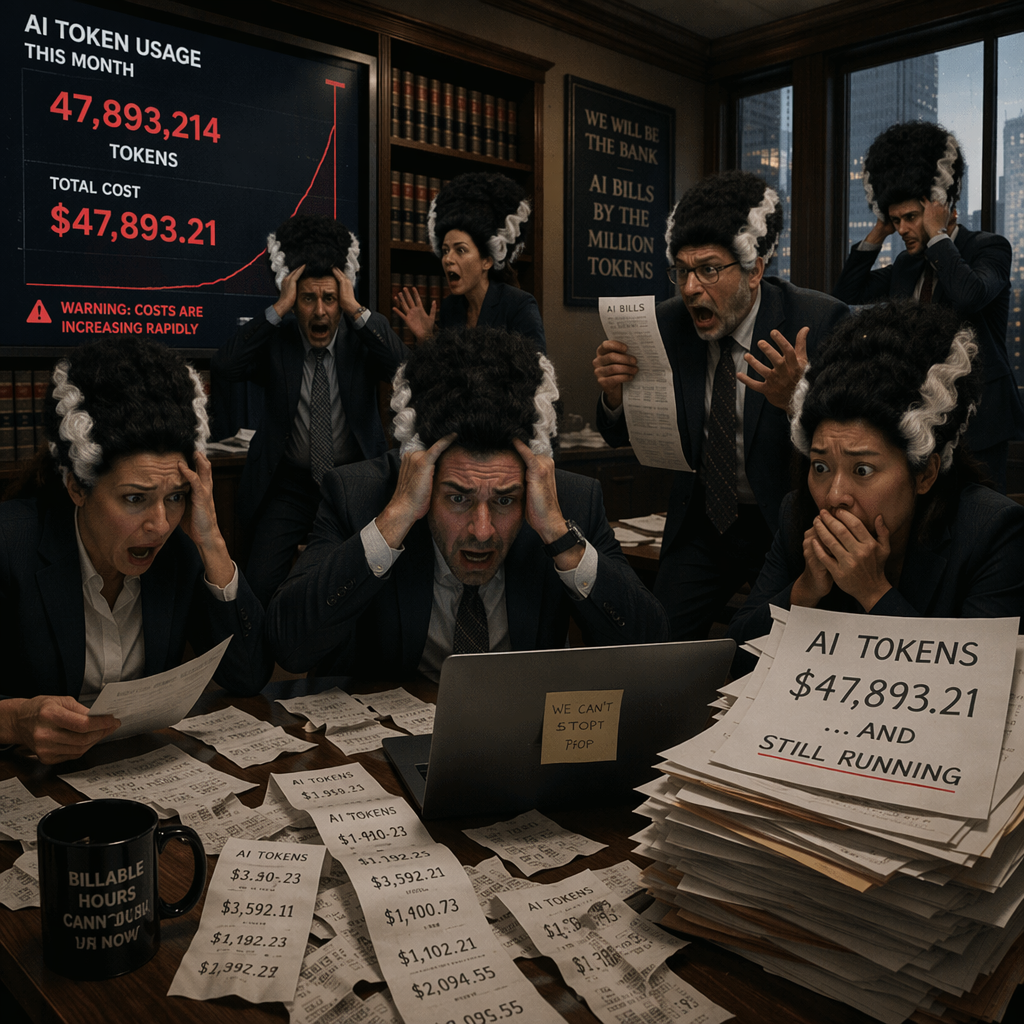

There is a growing chorus of voices in legal AI telling you to be very, very worried about the cost of tokens.