I have a prediction I want to share with you. It is something I envision happening just a few short weeks from now. I imagine an associate at a law firm doing something that will make every product manager at Thomson Reuters and LexisNexis choke on their morning coffee. She has a contract dispute question. A real one. There is a partner waiting. And the clock is ticking.

She won’t open Westlaw. She won’t open Lexis. She won’t open her browser at all.

She types her question into a work-approved AI chat window. Twenty-four minutes later she has a memo, citations included, sent off to the partner. She enters 0.4 hours in her time entry system. And she is done.

The KeyCite red flag that Thomson Reuters spent generations building? Never saw it. The Shepard’s signal? Didn’t see that either. The annotated treatise hierarchy that some editor in Eagan, Minnesota agonized over? Came through as a flat blob of text in a JSON response that the model summarized into a single sentence.

Don’t get me wrong. Westlaw and Lexis were in the research process. She just didn’t notice they were there. And that, friends, is the near-future I want to talk about.

I’ve been privately calling this “Shadow UX.” Think of it as the user-experience cousin of Shadow IT. We all know the effects of Shadow IT, right? That was when the marketing team started using Dropbox without telling the IT department, and three years later IT realized the entire company’s roadmap was sitting on someone’s personal account. Shadow UX is the same thing at the interface layer. An unauthorized layer sitting between the user and the vendor’s product, and the vendor doesn’t control it, doesn’t design it, and increasingly doesn’t even know it exists.

For legal information vendors, the Shadow UX layer is mostly an LLM with a few tool calls bolted on. There are other versions out there too: browser extensions that re-skin search results, paralegals building Notion dashboards off APIs, scraping wrappers feeding firm intranets. The AI agent is the one eating everyone’s lunch though.

Here’s why I’ve been thinking about Shadow UX so much lately.

For thirty years vendors competed on the browser/dashboard. The fancy charts. The little visual icons. The hover states. Pixel-perfect interfaces designed for a human eye scanning a screen. In 2026, the user is increasingly something else. It’s a model reading a JSON schema at inference time. If you’ve optimized your product for an audience that’s becoming the minority of your traffic, you’re going to find out the hard way.

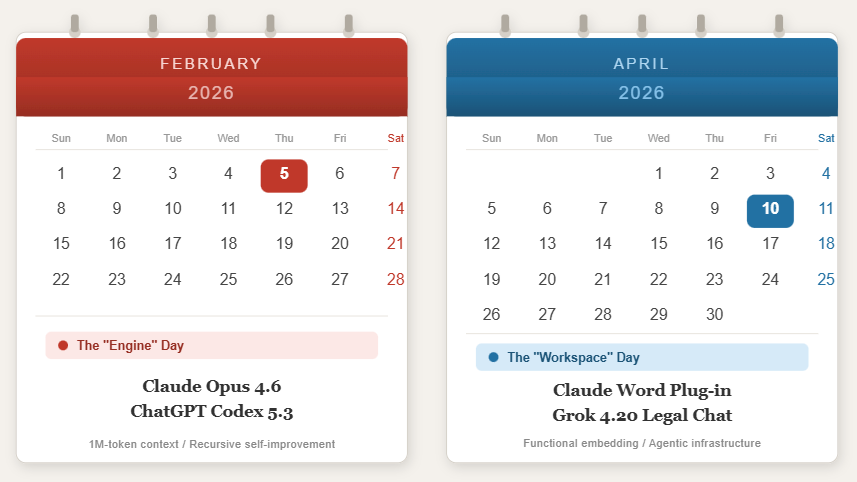

OK so why now? Three things had to happen at the same time, and they all did within about eighteen months.

First, the models actually got good. The 2024 models couldn’t handle jurisdictional nuance. The 2026 models draft memos that pass partner review. Not every time, sure, though often enough that associates are using them anyway.

Next, the billable hour math became impossible to ignore. We bill in six-minute increments. Any tool that turns a ninety-minute task into nine minutes is going to get used, with or without IT’s blessing. (Sound familiar? Hello again, Shadow IT.)
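If you want to see that math on paper, here is a rough sketch. It assumes time is rounded up to the next six-minute increment (one tenth of an hour), which is a common convention but not a universal one, and the function name is mine, not any billing system's.

```python
import math

INCREMENT_MINUTES = 6  # one tenth of an hour


def billable_hours(minutes: float) -> float:
    """Round a task up to the next six-minute increment and return hours.

    Rounding up is an assumption for illustration; firms differ on this.
    """
    increments = math.ceil(minutes / INCREMENT_MINUTES)
    return increments / 10


before = billable_hours(90)  # the ninety-minute task
after = billable_hours(9)    # the same task in nine minutes
memo = billable_hours(24)    # the twenty-four-minute memo from the opening

print(before, after, memo)
```

Under those assumptions the ninety-minute task bills at 1.5 hours, the nine-minute version at 0.2, and the twenty-four-minute memo at the 0.4 hours the associate entered.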

And finally, the Model Context Protocol showed up. MCP is the part of this story that doesn’t get enough attention. Imagine if every database, every research platform, every internal wiki spoke a common language to AI agents. That’s MCP. Companies like NetDocuments and Midpage adopted it. Specialized vendors are rolling out MCP servers for everything from patent search to legislative tracking. Once the protocol got standardized, the vendor’s UI stopped being a moat and started being a speed bump.

Now here’s the part that should worry legal information providers. The editorial work that built these companies, the headnotes, the Key Number system, KeyCite, Shepard’s, all of that gets flattened.

In a portal, a KeyCite red flag is loud. It’s red. It’s literally a flag. You see it before you see anything else on the page. In the Shadow UX layer, it’s a token in a JSON field. If the model’s summarization logic doesn’t promote it, the user never sees it. The signal is technically still there. It’s just invisible.
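To make that concrete, here is a toy sketch of the difference between a summarizer that ignores the flag field and one that promotes it. Everything in it is made up for illustration: the payload shape, the `citator_flag` field name, and the placeholder cases are assumptions, not any vendor's actual schema or API.

```python
import json

# Hypothetical tool response. Field names and cases are placeholders only.
RAW = json.dumps({
    "results": [
        {"case": "Case A", "summary": "Supports the breach claim.", "citator_flag": "red"},
        {"case": "Case B", "summary": "Limits damages to direct losses.", "citator_flag": None},
    ]
})


def naive_summary(payload: str) -> str:
    """Flatten the results into prose; the flag field is silently dropped."""
    results = json.loads(payload)["results"]
    return " ".join(r["summary"] for r in results)


def flag_first_summary(payload: str) -> str:
    """Put any red-flagged case at the top before the ordinary summaries."""
    results = json.loads(payload)["results"]
    lines = [
        f"WARNING: {r['case']} carries a red flag."
        for r in results
        if r.get("citator_flag") == "red"
    ]
    lines.extend(f"{r['case']}: {r['summary']}" for r in results)
    return "\n".join(lines)


print(naive_summary(RAW))
print(flag_first_summary(RAW))
```

Both functions read the same JSON. Only the second one makes a choice to surface the flag, and that choice lives in the agent layer, not in the vendor's product.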

The headnote tree is worse. Editors spent generations nesting these things to show legal relationships. Models hate hierarchies. They flatten them into bullet lists, or worse, into prose. The categorical context disappears.

And then there’s the provenance problem, which is the one that actually worries me. When an agent synthesizes ten cases into one paragraph, the user gets a confident narrative. They don’t see that eight came from KeyCite-validated sources and two came from a sketchy public database the model decided to trust. The vendor’s brand was always the proxy for “this is reliable.” When the brand is invisible, the proxy is gone.
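Here is a minimal sketch of what keeping provenance attached could look like, using the eight-and-two split from the paragraph above. The `Source` record, the origin labels, and the footer format are all hypothetical; no real agent framework or vendor API is being described.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    citation: str
    origin: str      # hypothetical label for where the case came from
    validated: bool  # whether a citator check backed it


def provenance_footer(sources: list[Source]) -> str:
    """Summarize where the synthesized cases came from, grouped by origin."""
    counts = Counter((s.origin, s.validated) for s in sources)
    parts = []
    for (origin, validated), n in sorted(counts.items()):
        status = "citator-validated" if validated else "not validated"
        parts.append(f"{n} from {origin} ({status})")
    return "Sources: " + "; ".join(parts)


# Ten placeholder cases: eight validated, two from an unvetted public database.
sources = [Source(f"Case {i}", "vendor_database", True) for i in range(1, 9)]
sources += [Source(f"Case {i}", "public_database", False) for i in range(9, 11)]

print(provenance_footer(sources))
```

A one-line footer like this is not a fix for the brand problem, but it shows how little it takes to keep the eight-versus-two distinction from vanishing into the narrative.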

I’ll put it bluntly. If you’re a research vendor, your brand value is currently being laundered through someone else’s chat interface, and you’re not getting credit for it.

The pricing model is the other shoe about to drop.

Seat-based pricing is the deal we’ve all lived with since the 90s. You pay per lawyer. The lawyer logs in. Everybody understands. Now… an AI agent doesn’t log in. It doesn’t have a seat. It can do the work of fifteen associates in an afternoon though. So vendors are watching seat counts flatten while their compute costs spike. The infrastructure bill goes up while the revenue line goes sideways. That’s not a sustainable shape.

The industry is wobbling toward usage-based and outcome-based pricing. Pay per query. Pay per resolved research task. Pay per drafted clause. Salesforce and Zendesk are already doing this in their own categories. The math makes sense for vendors. The problem is that law firms hate metered bills. CIOs cite cost forecasting as the number one headache with consumption pricing. Nobody wants their Westlaw bill to look like an AWS invoice.

Here’s where the real fight is going to happen, and I haven’t seen anybody talk about it openly yet.

Put yourself in the chair of a Westlaw or Lexis sales VP. You’re watching seat utilization drop. Associates are logging in less. Partners barely log in at all. The minutes-per-seat metric you’ve been using internally to justify renewals is collapsing. Meanwhile your compute costs are spiking because the firm’s MCP-connected agents are hammering your APIs and MCP servers at three in the morning to draft research memos.

What do you do?

I’ll tell you what you do. You add an AI agent access fee on top of the seat license. Premium tier. “Enterprise agentic access.” Whatever the marketing team lands on. And you keep raising the per-seat price every renewal cycle. Because if each seat is getting cheaper for the firm to actually use, your only path to flat or growing revenue is to charge more for each one. Double dip. Seats plus agents. Stack them.

Now flip the chair. You’re a firm CIO or a law firm library director. Your usage data shows seat logins dropping. Your associates are no longer going directly to Westlaw or Lexis. The vendor calls to renew, the price per seat is up 10%, and now there’s a separate line item for “agent access” that wasn’t on last year’s quote. You ask why you’re paying more for less. The vendor explains, with a straight face, that the value sits in the data, the agent extracts more value per query, and the bill reflects that. You disagree.

That’s the battle.
