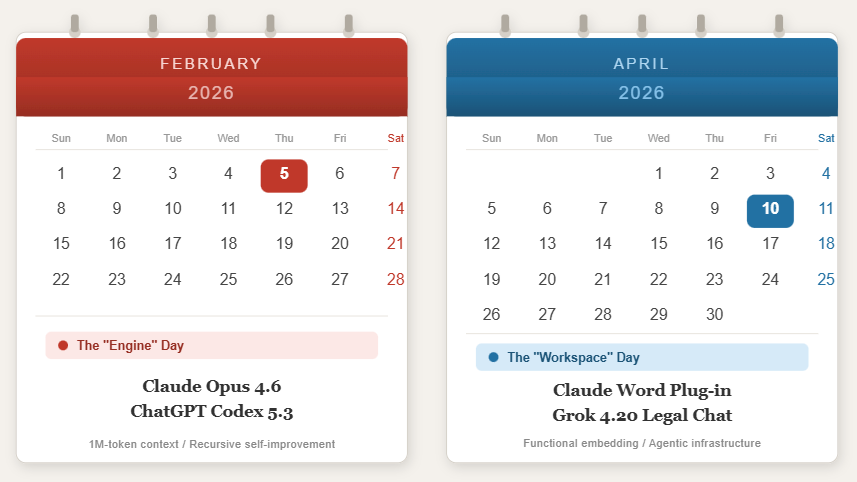

I’ll be the first to admit it. Back on February 5th, when Anthropic dropped Claude Opus 4.6 and OpenAI fired back with GPT-5.3 Codex on the same day, I was right there geeking out with everyone else. A million-token context window! A model that helped build itself! People were calling it the “Kendrick vs. Drake” of the AI world, which… well, okay, I’ll allow it just this one time.

The problem is, February 5th didn’t actually change how most law firms and their lawyers worked the next morning.

April 10th did.

The Sidebar That Changed Everything

Last Friday, Anthropic released Claude for Word as a public beta. A simple Word add-in. A sidebar.

And I think it might be the most consequential legal tech release so far in 2026.

I know, I know. A sidebar? But think about what it actually solves. Every lawyer I’ve talked to who has been using AI for the past two years without a Harvey or Legora plug-in has been doing the same awkward dance: copying text out of Word, pasting it into a browser, prompting the AI, copying the response back, manually formatting it, and hoping nothing breaks along the way. It’s the AI equivalent of printing out an email so you can fax it to someone.

The Claude Word add-in kills that workflow dead. You prompt Claude right there in the document. It returns edits as tracked changes. Actual tracked changes, in the review pane, with the formatting intact. Your multilevel numbering doesn’t explode. Your defined terms survive. Cross-references stay put.

For transactional lawyers, it goes even further. A single Claude conversation can span your Word purchase agreement, your Excel valuation model, and your PowerPoint deal summary. You can ask it to check whether the narrative in your financial report matches the underlying data without leaving the document you’re working in.

Is it perfect? No. It’s beta. Anthropic themselves have warned against using it for final legal filings without human oversight. And the security folks are rightly flagging the risk of prompt injection attacks embedded in external documents, something that should make every information governance person sit up straight.

But the direction is unmistakable. AI just moved from the browser tab to the document.

Meanwhile, Musk Brought a Debate Team

On the same Friday, xAI released Grok 4.20, and the architecture is genuinely interesting. Instead of one model trying to be right, Grok runs four specialized agents (a coordinator, a researcher, a logician, and a creator) that essentially argue with each other until they reach consensus.

The result? A 22% hallucination rate. The lowest ever measured by Artificial Analysis. For an industry where hallucinated case citations have gotten lawyers sanctioned by federal judges, that number matters.

But here’s where it gets weird. Reports surfaced that banks and law firms working on the upcoming SpaceX IPO (we’re talking JPMorgan, Goldman Sachs) have been required to subscribe to Grok. Not recommended. Required.

So now we have a world where your legal tech stack isn’t just something you choose. It’s something that gets mandated as part of the deal ecosystem. That should make everyone uncomfortable, regardless of how you feel about the underlying technology. And since no law firm or corporate AI strategy leader has ever seriously said, “You know what we really want to build our AI strategy on? Grok Enterprise,” I guess there have to be some incentives involved, right?

The “SaaSpocalypse” and Who Actually Survives

You can’t understand April 10th without backing up to February 3rd, when the announcement of Claude’s legal plugins triggered what the market delightfully called the “SaaSpocalypse.” A $285 billion single-day selloff in SaaS stocks. Investors panicked. If Claude can do contract review natively inside Word, what happens to all the legal tech companies whose entire value proposition is… putting a UI on Claude?

By April 10th, the dust had settled and the answer was becoming clearer. Ron Friedmann and the Gartner team noted (and just for fun, I did too) that Anthropic’s plugin wasn’t really a threat to companies like LexisNexis or Thomson Reuters. Those vendors have decades of curated case law and proprietary data that no foundation model can replicate overnight. They slept fine Friday night.

The companies that should be worried? The ones whose moat was “we integrated an LLM.” Because that integration moat just evaporated. The Model Context Protocol (MCP) is standardizing how AI connects to databases like iManage and Clio, and it’s doing to legal tech wrappers what USB-C did to proprietary chargers. If you’re a workflow platform like DISCO or Consilio, you’ve got some runway, but you’d better be “platformizing” fast. And if your company’s entire pitch was “we put a nice interface on Claude”… well, I hope the resumes are updated. Anthropic just showed up in your lane and they’re not charging a markup.

I keep coming back to what Stephane Boghossian nailed: “Prompting isn’t a product.” That’s the harsh truth of April 10th. The thin-wrapper era is over.

The Regulators Apparently Got the Memo

If it had just been the Claude and Grok releases, April 10th would have been a busy Friday. But the regulators decided this was their day to show up too.

The White House dropped a National Policy Framework for AI that proposes preempting state-level AI development regulations. That’s a direct shot at California’s SB 53 and Colorado’s SB 24-205. The framework includes a “Right to Compute” provision that would prevent states from banning AI-assisted legal research or drafting. For our world, that’s significant.

Speaking of Colorado, Elon Musk’s xAI filed a 75-page federal lawsuit (xAI v. Weiser) to block Colorado’s algorithmic bias law, arguing it would force Grok to promote a “State-enforced orthodoxy.” Now, I find the idea that an AI model has a “disinterested pursuit of truth” that deserves First Amendment protection to be… let’s say a stretch. But Musk’s lawyers aren’t dumb, and the underlying question (can a state force an AI model to produce certain kinds of outputs?) is one that’s going to matter a lot more in two years than it does today. Keep your eye on this one.

And in what might be the most practically useful development of the day, the Third Circuit ruled that legal tech startup UpCodes’ publication of copyrighted building standards online likely constitutes fair use. If that holds, it has real implications for every AI-driven legal research tool that needs to ingest government-published standards.

“Trust, Not Technology, Is the Constraint”

Here’s where I want to get practical, because I think the industry conversation has gotten stuck on the wrong question.

Everyone keeps asking: “Which model is best?” The benchmark tables are everywhere. Gemini 3.1 Pro and GPT-5.4 are tied for first on the intelligence index. Claude Opus 4.6 leads in coding. Grok 4.20 leads in hallucination reduction. It’s all very interesting and almost entirely beside the point for most working lawyers.

The real question is: do your people trust it enough to use it?

Wolters Kluwer’s research shows 54% of clients now expect their legal partners to be AI-competent. Not “exploring AI.” Competent. They’re asking sharper questions about turnaround times and whether those times reflect an AI-accelerated workflow.

Industry leaders like Mark Brennan and Nicole Stone have it right: “Trust, not technology, is the constraint.” February 5th gave us the raw capability. April 10th started building the infrastructure to make that capability usable.

And I’ll tell you what I’m watching most closely: the emergence of law librarians and knowledge managers as the critical players in this transition. The people who have spent their careers managing information, evaluating sources, and training professionals to use complex research tools? They’re exactly the people firms need right now. Not sitting at reference desks but operating from what some are calling “Genius Bar” environments, helping attorneys learn to work with AI responsibly.

The more things change, right?

Was April 10th Really That Important?

I’m going to say yes.

February 5th showed us what AI could do. It was impressive. It was exciting. And for most working lawyers, it was abstract. April 10th showed us how lawyers would actually work. Claude moved into the Word sidebar. The White House started drawing regulatory lines. The courts weighed in on fair use. The market sorted out which legal tech companies had real value and which were just wrapping someone else’s model in a nicer box.

February 5th was the day we all said “wow.” April 10th was the day somebody said “okay, but where does this actually go in my workflow?” And then it showed up in Word. Right there in the sidebar. Like it had always been there.

So here’s what I want to know: when the AI is no longer a separate step, when it’s just in the document, invisible, ambient… who at your firm owns the quality control? Is it the knowledge management and library professionals who have spent twenty years figuring out how to evaluate information sources and train people to use them responsibly? Or is that still being treated as an afterthought?